
A young African American man, Randal Quran Reid, was pulled over by the state police in Georgia. He was arrested under warrants issued by Louisiana police for two cases of theft in New Orleans. The arrest warrants had been based solely on a facial recognition match, though that was never mentioned in any police document; the warrants claimed "a credible source" had identified Reid as the culprit. The facial recognition match was incorrect and Reid was released. Reid ... is not the only victim of a false facial recognition match. So far all those arrested in the US after a false match have been black. From surveillance to disinformation, we live in a world shaped by AI. The reason that Reid was wrongly incarcerated had less to do with artificial intelligence than with ... the humans that created the software and trained it. Too often when we talk of the "problem" of AI, we remove the human from the picture. We worry AI will "eliminate jobs" and make millions redundant, rather than recognise that the real decisions are made by governments and corporations and the humans that run them. We have come to view the machine as the agent and humans as victims of machine agency. Rather than seeing regulation as a means by which we can collectively shape our relationship to AI, it becomes something that is imposed from the top as a means of protecting humans from machines. It is not AI but our blindness to the way human societies are already deploying machine intelligence for political ends that should most worry us.

Note: For more along these lines, see concise summaries of deeply revealing news articles on police corruption and the disappearance of privacy from reliable major media sources.

American Amara Majeed was accused of terrorism by the Sri Lankan police in 2019. Robert Williams was arrested outside his house in Detroit and detained in jail for 18 hours for allegedly stealing watches in 2020. Randal Reid spent six days in jail in 2022 for supposedly using stolen credit cards in a state he’d never even visited. In all three cases, the authorities had the wrong people. In all three, it was face recognition technology that told them they were right. Law enforcement officers in many U.S. states are not required to reveal that they used face recognition technology to identify suspects. Surveillance is predicated on the idea that people need to be tracked and their movements limited and controlled in a trade-off between privacy and security. The assumption that less privacy leads to more security is built in. That may be the case for some, but not for the people disproportionately targeted by face recognition technology. As of 2019, face recognition technology misidentified Black and Asian people at up to 100 times the rate of white people. In 2018 ... 28 members of the U.S. Congress ... were falsely matched with mug shots on file using Amazon’s Rekognition tool. Much early research into face recognition software was funded by the CIA for the purposes of border surveillance. More recently, private companies have adopted data harvesting techniques, including face recognition, as part of a long practice of leveraging personal data for profit.

Note: For more along these lines, see concise summaries of deeply revealing news articles on police corruption and the disappearance of privacy from reliable major media sources.

OpenAI was created as a non-profit-making charitable trust, the purpose of which was to develop artificial general intelligence, or AGI, which, roughly speaking, is a machine that can accomplish, or surpass, any intellectual task humans can perform. It would do so, however, in an ethical fashion to benefit “humanity as a whole”. Two years ago, a group of OpenAI researchers left to start a new organisation, Anthropic, fearful of the pace of AI development at their old company. One later told a reporter that “there was a 20% chance that a rogue AI would destroy humanity within the next decade”. One may wonder about the psychology of continuing to create machines that one believes may extinguish human life. The problem we face is not that machines may one day exercise power over humans. That is speculation unwarranted by current developments. It is rather that we already live in societies in which power is exercised by a few to the detriment of the majority, and that technology provides a means of consolidating that power. For those who hold social, political and economic power, it makes sense to project problems as technological rather than social and as lying in the future rather than in the present. There are few tools useful to humans that cannot also cause harm. But they rarely cause harm by themselves; they do so, rather, through the ways in which they are exploited by humans, especially those with power.

Note: Read how AI is already being used for war, mass surveillance, and questionable facial recognition technology.

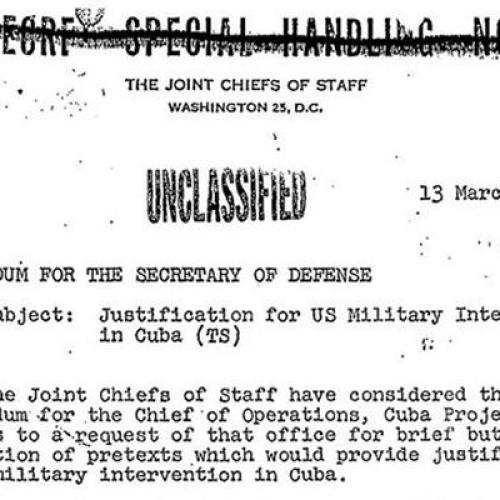

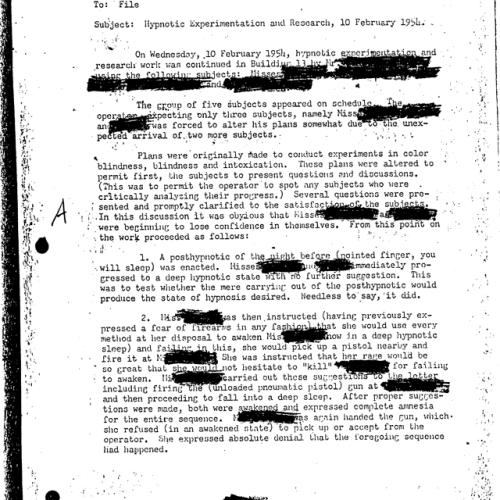

Facial recognition has become a security feature of choice for phones, laptops, passports, and payment apps. Yet it is also, increasingly, a tool of state oppression and corporate surveillance. Immigration and Customs Enforcement and the FBI have deployed the technology as a digital dragnet, searching for suspects among millions of faces in state driver’s license databases, sometimes without first seeking a court order. In early 1963, [Woody Bledsoe] proposed to conduct “a study to determine the feasibility of a simplified facial recognition machine.” A recently declassified history of the CIA’s Office of Research and Development mentions just such a project in 1965; that same year, Woody sent a letter on facial recognition to John W. Kuipers, the division’s chief of analysis. In 1967 ... Woody took on one last assignment that involved recognizing patterns in the human face. The purpose of the experiment was to help law enforcement agencies quickly sift through databases of mug shots and portraits, looking for matches. As before, funding for the project appears to have come from the US government. A 1967 document declassified by the CIA in 2005 mentions an “external contract” for a facial-recognition system that would reduce search time by a hundredfold. Woody’s work set an ethical tone for research on facial recognition that has been enduring and problematic. The potential abuses of facial-recognition technology were apparent almost from its birth.

Note: For more along these lines, see concise summaries of deeply revealing news articles on intelligence agency corruption and the disappearance of privacy from reliable major media sources.

A controversial facial recognition database, used by police departments across the nation, was built in part with 30 billion photos the company scraped from Facebook and other social media users without their permission. The company, Clearview AI, boasts of its potential for identifying rioters at the January 6 attack on the Capitol, saving children being abused or exploited, and helping exonerate people wrongfully accused of crimes. But critics point to privacy violations and wrongful arrests fueled by faulty identifications made by facial recognition, including cases in Detroit and New Orleans, as cause for concern over the technology. Once a photo has been scraped by Clearview AI, biometric face prints are made and cross-referenced in the database, tying the individuals to their social media profiles and other identifying information forever, and people in the photos have little recourse to try to remove themselves. CNN reported Clearview AI last year claimed the company's clients include "more than 3,100 US agencies, including the FBI and Department of Homeland Security." BBC reported Miami Police acknowledged they use the technology for all kinds of crimes, from shoplifting to murder. The risk of being included in what is functionally a "perpetual police line-up" applies to everyone, including people who think they have nothing to hide, [said] Matthew Guariglia, a senior policy analyst for the international non-profit digital rights group Electronic Frontier Foundation.

Note: Read about the rising concerns of the use of Clearview AI technology in Ukraine, with claims to help reunite families, identify Russian operatives, and fight misinformation. For more along these lines, see concise summaries of deeply revealing news articles on corporate corruption and the disappearance of privacy from reliable major media sources.

Australia's two most populous states are trialling facial recognition software that lets police check people are home during COVID-19 quarantine, expanding trials that have sparked controversy to the vast majority of the country's population. Little-known tech firm Genvis Pty Ltd said on a website for its software that New South Wales (NSW) and Victoria, home to Sydney, Melbourne and more than half of Australia's 25 million population, were trialling its facial recognition products. The Perth, Western Australia-based startup developed the software in 2020 with WA state police to help enforce pandemic movement restrictions. South Australia state began trialling a similar, non-Genvis technology last month, sparking warnings from privacy advocates around the world about potential surveillance overreach. The involvement of New South Wales and Victoria, which have not disclosed that they are trialling facial recognition technology, may amplify those concerns. Under the system being trialled, people respond to random check-in requests by taking a 'selfie' at their designated home quarantine address. If the software, which also collects location data, does not verify the image against a "facial signature", police may follow up with a visit to the location to confirm the person's whereabouts. While the recognition technology has been used in countries like China, no other democracy has been reported as considering its use in connection with coronavirus containment procedures.

Note: On Sept. 21st, thousands of citizens took to the streets to protest policies like this in Melbourne alone, as shown in this revealing video. The police are responding almost like they are at war, as shown in this video. Yet the major media outside of Australia are largely ignoring all of this, while the Australian press is highly biased against the protesters. Are we moving towards a police state? For more along these lines, see concise summaries of deeply revealing news articles on the coronavirus and the disappearance of privacy from reliable major media sources.

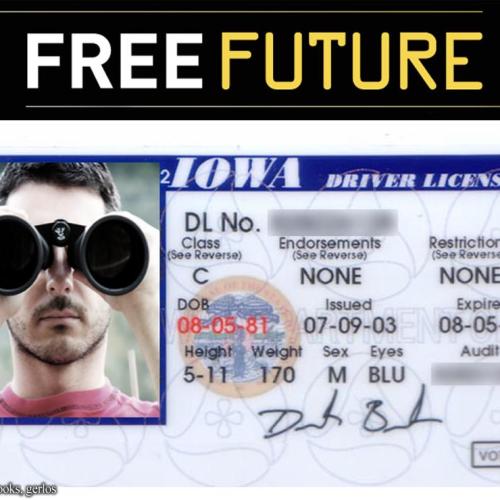

The largest undercover force the world has ever known is the one created by the Pentagon over the past decade. Some 60,000 people now belong to this secret army, many working under masked identities and in low profile, all part of a broad program called "signature reduction." The force, more than ten times the size of the clandestine elements of the CIA, carries out domestic and foreign assignments ... sometimes hiding in private businesses and consultancies. The newest and fastest growing group is the clandestine army that never leaves their keyboards. These are the cutting-edge cyber fighters and intelligence collectors who assume false personas online ... or even engage in campaigns to influence and manipulate social media. Hundreds work in and for the NSA, but over the past five years, every military intelligence and special operations unit has developed some kind of "web" operations cell that both collects intelligence and tends to the operational security of its very activities. No one knows the program's total size, and the explosion of signature reduction has never been examined for its impact on military policies and culture. Congress has never held a hearing on the subject. And yet the military developing this gigantic clandestine force challenges U.S. laws, the Geneva Conventions, the code of military conduct and basic accountability. A major task of signature reduction is keeping all of the organizations and people, even the automobiles and aircraft involved in the clandestine operations, masked. The signature reduction industry also works to figure out ways of spoofing and defeating everything from fingerprinting and facial recognition at border crossings, to ensuring that undercover operatives can enter and operate in the United States, manipulating official records to ensure that false identities match up.

Note: Learn more about mission creep in our comprehensive Military-Intelligence Corruption Information Center. For more, see concise summaries of deeply revealing news articles on corruption in the military and in the intelligence community from reliable major media sources.

When the man from Hangzhou returned home from a business trip, the local police got in touch. They had tracked his car by his license plate in nearby Wenzhou, which has had a spate of coronavirus cases. Stay indoors for two weeks, they requested. After around 12 days, he was bored and went out early. This time, not only did the police contact him, so did his boss. He had been spotted ... by a camera with facial recognition technology, and the authorities had alerted his company as a warning. "I was a bit shocked by the ability and efficiency of the mass surveillance network. They can basically trace our movements ... at any time and any place," said the man, who asked not to be identified for fear of repercussions. Chinese have long been aware that they are tracked by the world's most sophisticated system of electronic surveillance. The coronavirus emergency has brought some of that technology out of the shadows, providing the authorities with a justification for sweeping methods of high tech social control. Artificial intelligence and security camera companies boast that their systems can scan the streets for people with even low-grade fevers, recognize their faces even if they are wearing masks and report them to the authorities. If a coronavirus patient boards a train, the railway's "real name" system can provide a list of people sitting nearby. Mobile phone apps can tell users if they have been on a flight or a train with a known coronavirus carrier, and maps can show them ... where infected patients live.

Note: The New York Times strangely removed this article. Yet it is also available here. Is there something they don't want us to know? Read an excellent article showing how this virus scare is being used to test China's intense surveillance technologies in very disturbing ways. For more along these lines, see concise summaries of deeply revealing news articles on government corruption and the disappearance of privacy from reliable major media sources.

The eruption of racist violence in England and Northern Ireland raises urgent questions about the responsibilities of social media companies, and how the police use facial recognition technology. While social media isn’t the root of these riots, it has allowed inflammatory content to spread like wildfire and helped rioters coordinate. The great elephant in the room is the wealth, power and arrogance of the big tech emperors. Silicon Valley billionaires are richer than many countries. That mature modern states should allow them unfettered freedom to regulate the content they monetise is a gross abdication of duty, given their vast financial interest in monetising insecurity and division. In recent years, [facial recognition] has been used on our streets without any significant public debate. We wouldn’t dream of allowing telephone taps, DNA retention or even stop and search and arrest powers to be so unregulated by the law, yet this is precisely what has happened with facial recognition. Our facial images are gathered en masse via CCTV cameras, the passport database and the internet. At no point were we asked about this. Individual police forces have entered into direct contracts with private companies of their choosing, making opaque arrangements to trade our highly sensitive personal data with private companies that use it to develop proprietary technology. There is no specific law governing how the police, or private companies ... are authorised to use this technology. Experts at Big Brother Watch believe the inaccuracy rate for live facial recognition since the police began using it is around 74%, and there are many cases pending about false positive IDs.

Note: Law enforcement officers in many US states are not required to reveal that they used face recognition technology to identify suspects, even though misidentification is a common occurrence. For more along these lines, see concise summaries of deeply revealing news articles on Big Tech and the disappearance of privacy from reliable major media sources.

The Covid-19 pandemic is now giving Russian authorities an opportunity to test new powers and technology, and the country's privacy and free-speech advocates worry the government is building sweeping new surveillance capabilities. Perhaps the most well-publicized tech tool in Russia's arsenal for fighting coronavirus is Moscow's massive facial-recognition system. Rolled out earlier this year, the surveillance system had originally prompted an unusual public backlash, with privacy advocates filing lawsuits over unlawful surveillance. Coronavirus, however, has given an unexpected public-relations boost to the system. Last week, Moscow police claimed to have caught and fined 200 people who violated quarantine and self-isolation using facial recognition and a 170,000-camera system. Some of the alleged violators who were fined had been outside for less than half a minute before they were picked up by a camera. And then there's the use of geolocation to track coronavirus carriers. Prime Minister Mikhail Mishustin earlier this week ordered Russia's Ministry of Communications to roll out a tracking system based on "the geolocation data from the mobile providers for a specific person" by the end of this week. According to a description in the government decree, information gathered under the tracking system will be used to send texts to those who have come into contact with a coronavirus carrier, and to notify regional authorities so they can put individuals into quarantine.

Note: For more along these lines, see concise summaries of deeply revealing news articles on the coronavirus pandemic and the disappearance of privacy from reliable major media sources.

Like the 9/11 terrorist attacks in the U.S., the coronavirus pandemic is a crisis of such magnitude that it threatens to change the world in which we live, with ramifications for how leaders govern. Governments are locking down cities with the help of the army, mapping population flows via smartphones and jailing or sequestering quarantine breakers using banks of CCTV and facial recognition cameras backed by artificial intelligence. The restrictions are unprecedented in peacetime and made possible only by rapid advances in technology. And while citizens across the globe may be willing to sacrifice civil liberties temporarily, history shows that emergency powers can be hard to relinquish. "A primary concern is that if the public gives governments new surveillance powers to contain Covid-19, then governments will keep these powers after the public health crisis ends," said Adam Schwartz ... at the non-profit Electronic Frontier Foundation. Nearly two decades after the 9/11 attacks, the U.S. government still uses many of the surveillance technologies it developed in the immediate wake. In part, the Chinese Communist Party's containment measures at the virus epicenter in Wuhan set the tone, with what initially seemed shocking steps to isolate the infected being subsequently adopted in countries with no comparable history of China's state controls. For Gu Su ... at Nanjing University, China's political culture made its people more amenable to the draconian measures.

Note: For more along these lines, see concise summaries of deeply revealing news articles on the coronavirus and the disappearance of privacy from reliable major media sources.

Mr. Ton-That, an Australian techie and onetime model, did something momentous: He invented a tool that could end your ability to walk down the street anonymously. His tiny company, Clearview AI, devised a groundbreaking facial recognition app. You take a picture of a person, upload it and get to see public photos of that person, along with links to where those photos appeared. The system, whose backbone is a database of more than three billion images that Clearview claims to have scraped from Facebook, YouTube, Venmo and millions of other websites, goes far beyond anything ever constructed by the United States government or Silicon Valley giants. Without public scrutiny, more than 600 law enforcement agencies have started using Clearview in the past year. The computer code underlying its app ... includes programming language to pair it with augmented-reality glasses; users would potentially be able to identify every person they saw. The tool could identify activists at a protest or an attractive stranger on the subway, revealing not just their names but where they lived, what they did and whom they knew. And it's not just law enforcement: Clearview has also licensed the app to at least a handful of companies for security purposes. Because the police upload photos of people they're trying to identify, Clearview possesses a growing database of individuals who have attracted attention from law enforcement. The company also has the ability to manipulate the results that the police see.

Note: For lots more on this disturbing new technology, read one writer's personal experience with it. For more along these lines, see concise summaries of deeply revealing news articles on the disappearance of privacy from reliable major media sources.

China’s ambition to collect a staggering amount of personal data from everyday citizens is more expansive than previously known. Phone-tracking devices are now everywhere. The police are creating some of the largest DNA databases in the world. And the authorities are building upon facial recognition technology to collect voice prints from the general public. The Times’ Visual Investigations team and reporters in Asia spent over a year analyzing more than a hundred thousand government bidding documents. The Chinese government’s goal is clear: designing a system to maximize what the state can find out about a person’s identity, activities and social connections. In a number of the bidding documents, the police said that they wanted to place cameras where people go to fulfill their common needs — like eating, traveling, shopping and entertainment. The police also wanted to install facial recognition cameras inside private spaces, like residential buildings, karaoke lounges and hotels. Authorities are using phone trackers to link people’s digital lives to their physical movements. Devices known as WiFi sniffers and IMSI catchers can glean information from phones in their vicinity. DNA, iris scan samples and voice prints are being collected indiscriminately from people with no connection to crime. The government wants to connect all of these data points to build comprehensive profiles for citizens — which are accessible throughout the government.

Note: For more on this disturbing topic, see the New York Times article “How China is Policing the Future.” For more along these lines, see concise summaries of deeply revealing news articles on government corruption and the disappearance of privacy from reliable major media sources.

A growing number of supermarkets in Alabama, Oklahoma, and Texas are selling bullets by way of AI-powered vending machines, as first reported by Alabama's Tuscaloosa Thread. The company behind the machines, a Texas-based venture dubbed American Rounds, claims on its website that its dystopian bullet kiosks are outfitted with "built-in AI technology" and "facial recognition software," which allegedly allow the devices to "meticulously verify the identity and age of each buyer." As showcased in a promotional video, using one is an astoundingly simple process: walk up to the kiosk, provide identification, and let a camera scan your face. If its embedded facial recognition tech says you are in fact who you say you are, the automated machine coughs up some bullets. According to American Rounds, the main objective is convenience. Its machines are accessible "24/7," its website reads, "ensuring that you can buy ammunition on your own schedule, free from the constraints of store hours and long lines." Though officials in Tuscaloosa, where two machines have been installed, [said] that the devices are in full compliance with the Bureau of Alcohol, Tobacco, Firearms and Explosives' standards ... at least one of the devices has been taken down amid a Tuscaloosa city council investigation into its legal standing. "We have over 200 store requests for AARM [Automated Ammo Retail Machine] units covering approximately nine states currently," [American Rounds CEO Grant Magers] told Newsweek, "and that number is growing daily."

Note: Facial recognition technology is far from reliable. For more along these lines, see concise summaries of deeply revealing news articles on artificial intelligence from reliable major media sources.

Police in the U.S. recently combined two existing dystopian technologies in a brand new way to violate civil liberties. A police force in California recently employed the new practice of taking a DNA sample from a crime scene, running this through a service provided by US company Parabon NanoLabs that guesses what the perpetrator’s face looked like, and plugging this rendered image into face recognition software to build a suspect list. Parabon NanoLabs ... alleges it can create an image of the suspect’s face from their DNA. Parabon NanoLabs claims to have built this system by training machine learning models on the DNA data of thousands of volunteers with 3D scans of their faces. The process is yet to be independently audited, and scientists have affirmed that predicting face shapes, particularly from DNA samples, is not possible. But this has not stopped law enforcement officers from seeking to use it, or from running these fabricated images through face recognition software. Simply put: police are using DNA to create a hypothetical and not at all accurate face, then using that face as a clue on which to base investigations into crimes. This ... threatens the rights, freedom, or even the life of whoever is unlucky enough to look a little bit like that artificial face. These technologies, and their reckless use by police forces, are an inherent threat to our individual privacy, free expression, information security, and social justice.

Note: Law enforcement officers in many U.S. states are not required to reveal that they used face recognition technology to identify suspects. For more along these lines, see concise summaries of important news articles on police corruption and the erosion of civil liberties from reliable major media sources.

Weapons-grade robots and drones being utilized in combat isn't new. But AI software is, and it's enhancing – in some cases, to the extreme – the existing hardware, which has been modernizing warfare for the better part of a decade. Now, experts say, developments in AI have pushed us to a point where global forces now have no choice but to rethink military strategy – from the ground up. "It's realistic to expect that AI will be piloting an F-16 and will not be that far out," Nathan Michael, Chief Technology Officer of Shield AI, a company whose mission is "building the world's best AI pilot," says. We don't truly comprehend what we're creating. There are also fears that a comfortable reliance on the technology's precision and accuracy – referred to as automation bias – may come back to haunt, should the tech fail in a life or death situation. One major worry revolves around AI facial recognition software being used to enhance an autonomous robot or drone during a firefight. Right now, a human being behind the controls has to pull the proverbial trigger. Should that be taken away, militants could be misconstrued for civilians or allies at the hands of a machine. And remember when the fear of our most powerful weapons being turned against us was just something you saw in futuristic action movies? With AI, that's very possible. "There is a concern over cybersecurity in AI and the ability of either foreign governments or independent actors to take over crucial elements of the military," [filmmaker Jesse Sweet] said.

Note: For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

The US government’s new mobile app for migrants to apply for asylum at the US-Mexico border is blocking many Black people from being able to file their claims because of facial recognition bias in the tech, immigration advocates say. The app, CBP One, is failing to register many people with darker skin tones, effectively barring them from their right to request entry into the US. People who have made their way to the south-west border from Haiti and African countries, in particular, are falling victim to apparent algorithm bias in the technology that the app relies on. The government announced in early January that the new CBP One mobile app would be the only way migrants arriving at the border can apply for asylum and exemption from Title 42 restrictions. Racial bias in face recognition technology has long been a problem. Increasingly used by law enforcement and government agencies to fill databases with biometric information including fingerprints and iris scans, a 2020 report by Harvard University called it the “least accurate” identifier, especially among darker-skinned women with whom the error rate is higher than 30%. Emmanuella Camille, a staff attorney with the Haitian Bridge Alliance ... said the CBP One app has helped “lighter-skin toned people from other nations” obtain their asylum appointments “but not Haitians” and other Black applicants. Besides the face recognition technology not registering them ... many asylum seekers have outdated cellphones – if they have cellphones at all – that don’t support the CBP One app.

Note: For more along these lines, see concise summaries of deeply revealing news articles on government corruption and the erosion of civil liberties from reliable major media sources.

Britain's surveillance agency GCHQ, with aid from the US National Security Agency, intercepted and stored the webcam images of millions of internet users not suspected of wrongdoing, secret documents reveal. GCHQ files dating between 2008 and 2010 explicitly state that a surveillance program codenamed Optic Nerve collected still images of Yahoo webcam chats in bulk and saved them to agency databases, regardless of whether individual users were an intelligence target or not. In one six-month period in 2008 alone, the agency collected webcam imagery, including substantial quantities of sexually explicit communications, from more than 1.8 million Yahoo user accounts globally. Yahoo ... denied any prior knowledge of the program, accusing the agencies of "a whole new level of violation of our users' privacy". Optic Nerve, the documents provided by NSA whistleblower Edward Snowden show, began as a prototype in 2008 and was still active in 2012. The system, eerily reminiscent of the telescreens evoked in George Orwell's Nineteen Eighty-Four, was used for experiments in automated facial recognition, to monitor GCHQ's existing targets, and to discover new targets of interest. Such searches could be used to try to find terror suspects or criminals making use of multiple, anonymous user IDs. Rather than collecting webcam chats in their entirety, the program saved one image every five minutes from the users' feeds ... to avoid overloading GCHQ's servers. The documents describe these users as "unselected", intelligence agency parlance for bulk rather than targeted collection.

Note: For more on government surveillance, see the deeply revealing reports from reliable major media sources available here.

Over the last two months, Chinese citizens have had to adjust to a new level of government intrusion. Getting into one's apartment compound or workplace requires scanning a QR code, writing down one's name and ID number, temperature and recent travel history. Telecom operators track people's movements while social media platforms like WeChat and Weibo have hotlines for people to report others who may be sick. Some cities are offering people rewards for informing on sick neighbours. Chinese companies are meanwhile rolling out facial recognition technology that can detect elevated temperatures in a crowd or flag citizens not wearing a face mask. A range of apps use the personal health information of citizens to alert others of their proximity to infected patients. Experts say the virus ... has given authorities a pretext for accelerating the mass collection of personal data to track citizens. "It's mission creep," said Maya Wang, senior China researcher for Human Rights Watch. According to Wang, the virus is likely to be a catalyst for a further expansion of the surveillance regime. Citizens are particularly critical of a system called Health Code, which users can sign up for through Alipay or WeChat, that assigns individuals one of three colour codes based on their travel history, time spent in outbreak hotspots and exposure to potential carriers of the virus. The software, used in more than 100 cities, will soon allow people to check the colours of other residents when their ID numbers are entered.

Note: Learn in this New York Times article how everyone in China is given a red, yellow, or green code which determines how free they are to move about and even enter businesses. This article shows how foreigners are being stopped instantly from making live podcasts from China using facial recognition technology. For more along these lines, see concise summaries of deeply revealing news articles on the coronavirus and the disappearance of privacy from reliable major media sources.

Surveillance technologies have evolved at a rapid clip over the last two decades — as has the government’s willingness to use them in ways that are genuinely incompatible with a free society. The intelligence failures that allowed for the attacks on September 11 poured the concrete of the surveillance state foundation. The gradual but dramatic construction of this surveillance state is something that Republicans and Democrats alike are responsible for. Our country cannot build and expand a surveillance superstructure and expect that it will not be turned against the people it is meant to protect. The data that’s being collected reflect intimate details about our closely held beliefs, our biology and health, daily activities, physical location, movement patterns, and more. Facial recognition, DNA collection, and location tracking represent three of the most pressing areas of concern and are ripe for exploitation. Data brokers can use tens of thousands of data points to develop a detailed dossier on you that they can sell to the government (and others). Essentially, the data broker loophole allows a law enforcement agency or other government agency such as the NSA or Department of Defense to give a third party data broker money to hand over the data from your phone — rather than get a warrant. When pressed by the intelligence community and administration, policymakers on both sides of the aisle failed to draw upon the lessons of history.

Note: For more along these lines, see concise summaries of deeply revealing news articles on government corruption and the disappearance of privacy from reliable major media sources.