Warfare Technology News Stories

The DoD has ambitious plans for full spectrum dominance, seeking control over all potential battlespaces: land, ocean, air, outer space, and cyberspace. Artificial intelligence and other emerging technologies are being used to further these agendas, reshaping the military and geopolitical landscape in unprecedented ways.

In our news archive below, we examine how emerging warfare technology undermines national security, fuels terrorism, and causes devastating civilian casualties.

Related: Weapons of Mass Destruction, Biotech Dangers, Non-Lethal Weapons

[Veteran journalist Katrina Manson's] new book, “Project Maven: A Marine Colonel, His Team, and the Dawn of AI Warfare,” is an ... account of the ongoing reconfiguration of the U.S. armed forces for a new technological era. “Project Maven” is structured as an intellectual and professional biography of Drew Cukor, a Marine Corps intelligence officer largely responsible for ... this military transformation. Cukor insists that Maven was never supposed to be a weapon. He frequently defends the project as nothing more than an integrated data platform ... for a world made better and safer by A.I. warfare. In 2018, Google employees staged a massive walkout to protest the company’s work on a primitive iteration of the project. In the aftermath of the Google fiasco, Cukor turns to Palantir (in addition to Microsoft and Amazon) to make Maven a reality. NATO now has its own Maven contract with Palantir, and that prompted ten member nations to pursue one, too. The Maven Smart System has become a global surveillance apparatus—it can keep track of forty-nine thousand airfields all over the world—but its current work is hardly limited to intelligence provision and analysis. A “single click,” [journalist Katrina] Manson reports, “could send coordinates through a tactical data link to a specific weapons platform so that it could fire at the target.” The entire process, from target identification to target destruction, is four clicks. Officials told Manson that Maven was “accelerating operations and ‘enabling lethality’ at combat headquarters around the world.” Maven is only one part of the A.I. tool kit. Manson uncovers evidence of two clandestine killer-robot programs, one aerial and the other aquatic, which are being developed in haste. For the first time, the Pentagon’s proposed budget contained a line item for comprehensively self-directing systems. A machine can shoot, Manson reports, up to “ten times faster than an assassin.”

Note: For more, read our concise summaries of news articles on AI and military corruption.

One of the most intriguing secrets of Operation Epic Fury is how, using an “exquisite” piece of classified technology, the CIA succeeded in finding the injured airman in Iran by detecting his heartbeat, the tiniest evidence of human life concealed in a narrow crevice up a 7,000ft mountain ridge. The technology that led to the airman’s rescue by Seal Team Six commandos has been outed as a CIA “tool” called Ghost Murmur. It was reportedly developed as a highly classified “blue skies” invention by Lockheed Martin’s Skunk Works, the famous laboratory where young, brilliant scientists and engineers devote their time to finding solutions to impossible concepts. John Ratcliffe, the CIA director, hinted at the new technology in a press conference this week. “We deployed both human assets and exquisite technologies that no other intelligence service in the world possess to a daunting challenge, comparable to hunting for a single grain of sand in the middle of a desert,” Ratcliffe said. On the face of it, a futuristic magnetic sensing device ... pinpointed the missing colonel’s heartbeat across a 40-mile stretch of land. Ghost Murmur, as described, would appear to push the boundaries of physics beyond even the most exceptional human brain or computer. Intelligence sources would not confirm or deny the existence of Ghost Murmur. But reportedly the “CIA tool” relies on what is called quantum magnetometry, which can find signals of human hearts, aided by artificial intelligence to separate out all the other noises getting in the way.

Note: While it's unclear whether the Ghost Murmur tool was actually responsible for rescuing the injured soldier, this technology is not out of the realm of possibility. As early as the 1960s, the CIA had developed poison weapons capable of causing heart attacks remotely. Learn more about real-life exotic weapon technologies used by militaries around the world. For more along these lines, read our concise summaries of news articles on AI and intelligence agency corruption.

Palantir (PLTR)’s Maven artificial intelligence system will become an official program of record, Deputy Secretary of Defense Steve Feinberg said in a letter to Pentagon leaders, a move that locks in long-term use of Palantir’s weapons-targeting technology across the U.S. military. Maven is a command-and-control software platform that analyzes battlefield data and identifies targets. It is already the primary AI operating system for the U.S. military, which has carried out thousands of targeted strikes against Iran over the last three weeks. Designating Maven as a program of record will streamline its adoption across all arms of the military. The memo ordered oversight of Maven be moved from the National Geospatial-Intelligence Agency to the Pentagon’s Chief Digital and Artificial Intelligence Office within 30 days. Future contracting with Palantir will be handled by the Army, the letter said. Feinberg’s order is a significant win for Palantir, which has landed a growing stream of contracts with the U.S. government, including a deal announced last summer with the U.S. Army worth up to $10 billion. Those awards have helped double the company’s stock price in the past year, lifting its market value to nearly $360 billion. Maven can rapidly analyze huge amounts of data from satellites, drones, radars, sensors and intelligence reports, and use AI to automatically identify potential threats or targets.

Note: For more along these lines, read our concise summaries of news articles on AI and military corruption.

Laser guns are real now. Actual militaries are deploying actual lasers in actual combat. “This is a technology that has been under development for decades,” [said] Iain Boyd, an aerospace engineer. “And it’s only really now just really starting to enter the public view.” The Army has outfitted trucks with anti-drone lasers, and the Air Force has added ground-based lasers to its arsenal. Russia, China, and the United Kingdom are all developing—and in some cases already deploying—laser weapons, and last year, Israel became the first country to use a laser in combat to destroy a drone. The very real lasers now being deployed on battlefields around the world have some notable differences from most of their science-fictional forebears. They’re silent, for one thing—no pew pew sound effects—and the beam they produce is invisible. Real lasers have a number of other advantages. $13 a shot is pretty good compared with the Navy’s standard missile interceptors, which cost $2 million apiece. Another advantage of lasers is that they just keep going. Last year, Chinese scientists successfully beamed a precision non-weapon laser all the way to the moon. But infinite range is also a drawback. If a laser missed a drone, Boyd said, the beam could continue for hundreds of miles and hit, say, a commercial airliner. Even if a laser beam did hit its target, Boyd said, its light could still scatter and cause all manner of collateral damage.

Note: For more, read our concise summaries of news articles on warfare technologies.

The Department of Defense has quietly signed a $210 million deal to buy advanced cluster shells from one of Israel’s state-owned arms companies, marking unusually large new commitments to a class of weapons and an Israeli defense establishment both widely condemned for their indiscriminate killing of civilians. The deal, signed in September and not previously reported, is the department’s largest contract to purchase weapons from an Israeli company in available records. The shells are designed to replace decades-old and often defective cluster shells that left live explosives scattered across Vietnam, Laos, Iraq, and other nations. The terror of cluster weapons persists long after the guns that fired them have quieted, as civilians return to fields, forests, and settlements laced with bomblets that can explode years later without warning. “The footprint of the injuries of these weapons is so horrifying,” said Alma Taslidžan, advocacy manager for the aid organization Humanity & Inclusion. The Cluster Munition Monitor has documented more than 24,800 cluster munition injuries and deaths since the 1960s, three-quarters from unexploded remnants. In 2024, cluster munitions killed at least 314 civilians, the majority of them in Ukraine. Major military powers — like Russia, China, Israel, India, Pakistan, and the United States — have never signed the Convention on Cluster Munitions, which bans its 112 member states from using or producing those weapons.

Note: American cluster bombs kill countless civilians in countries like Yemen while the world's biggest banks profit from the weapons trade. For more along these lines, read our concise summaries of news articles on war and military corruption.

The AI surveillance platform provider Palantir is no stranger to controversy. It brings in billions each year from controversial partnerships with groups like Immigration and Customs Enforcement (ICE) and the Israeli Defense Forces, something CEO Alex Karp isn’t keen on changing anytime soon. In an interview ... this week, Karp even took it a step further, arguing that legalizing US war crimes would open up a whole new market for Palantir. Unlike other moguls profiting off the military industrial complex who hide behind concepts like “democracy” and “national security,” the Palantir CEO isn’t afraid to put his mouth where his money is with disarmingly bombastic language. In a letter to shareholders earlier this year, for instance, Karp quoted hawkish political scholar Samuel Huntington in arguing that the “rise of the West was not made possible ‘by the superiority of its ideas or values or religion… but rather by its superiority in applying organized violence.'” While this could be seen as a damning indictment of Western civilization and its violent stranglehold over the world economy, Karp instead positions it as a source of inspiration. In another part of his interview ... the Palantir CEO reaffirmed his commitment to ICE, emphasizing the important role he plays in making immigrants’ lives worse. “I’m going to use my whole influence to make sure this country stays skeptical on migration and has a deterrent capacity that it only uses selectively,” Karp said.

Note: Listen to an audio clip of Jeffrey Epstein promoting Palantir to Ehud Barak. Read how Palantir helped the NSA spy on the entire planet. For more along these lines, read our concise summaries of news articles on Big Tech and the disappearance of privacy.

Newly surfaced audio recordings appear to capture Jeffrey Epstein advising former Israeli prime minister Ehud Barak on the tech company Palantir, raising fresh questions about Epstein's global intelligence connections and influence. The recordings feature Epstein attempting to introduce Barak to Peter Thiel, Palantir's billionaire co-founder, describing the company and its potential board positions. Epstein's pitch highlighted the influence of major venture capital firms, including Andreessen Horowitz, positioning himself as the connector for powerful business and intelligence networks. Barak, a former head of Israeli military intelligence, later founded a security company reportedly invested in by Epstein, further fueling speculation about Epstein's ties to intelligence operations. Analysts note that Epstein's discussions with Barak blend technology advisory with geopolitical reach, suggesting that Palantir's capabilities may have been leveraged as part of broader influence operations. Previously released documents indicate he filed Freedom of Information Act (FOIA) requests with the CIA in 1999 and again in 2011, seemingly seeking acknowledgement of past affiliations. Additional FBI reports, now partially confirmed by the recordings, describe Epstein as a confidential human source with ties to both US and allied intelligence services. Conversations with Alan Dershowitz reportedly involved debriefs with Mossad.

Note: Read our latest in-depth Epstein files investigation, titled "Beyond Sex Trafficking—Zorro Ranch and a Darker Scientific Agenda." Listen to the audio clip of Jeffrey Epstein promoting Palantir to Ehud Barak. Read how Palantir helped the NSA spy on the entire planet. For more along these lines, read our concise summaries of news articles on Jeffrey Epstein's crime ring and intelligence agency corruption.

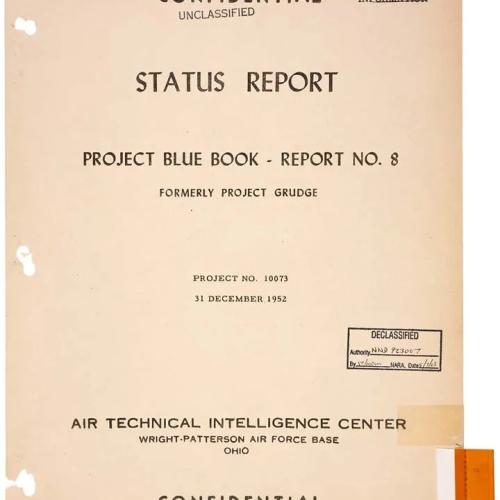

A mysterious UFO has been allegedly stored at a little-known US Navy base on the East Coast for decades as the military continues to reverse-engineer its secrets. A new report has claimed that Naval Air Station Patuxent River in Maryland, better known as Pax River, has kept an 'exotic vehicle of unknown origin' secretly housed there, possibly since the 1950s. According to anonymous sources tied to Naval Air Systems Command (NAVAIR), which is headquartered at Pax River, certain military programs at the base have been involved in analyzing and exploiting technology recovered from non-human craft for years. UFO whistleblower Luis Elizondo stated in written testimony to Congress that a specially built hangar was constructed at Pax River specifically for the transfer of extraterrestrial technology. Under oath, Elizondo described a plan where this hangar would help major defense contractor Lockheed Martin move non-human technology to another company called Bigelow Aerospace for further study and analysis. Last year, Dr Hal Puthoff, a physicist and electrical engineer who worked on the government's psychic spy and UFO research programs, revealed on the Joe Rogan Experience podcast that the US military has recovered more than 10 spacecraft since the infamous Roswell incident. Puthoff claimed that some of these craft were actually fully intact craft that had been 'gifted' to humans by extraterrestrials.

Note: Our 26-minute video UFO Disclosure: Breakthrough Technology and Awakening Human Consciousness features interviews with leading experts along with well-sourced, verifiable information to help you make sense of this fascinating issue and its immense potential to transform our world. For more, explore the comprehensive resources provided in our UFO Information Center.

“We had no way to compete with their technology, with their weapons. I swear, I’ve never seen anything like it,” a Venezuelan security guard says in a video widely shared on social media and promoted by the White House. His account tells how U.S. special forces in Venezuela captured then-President Maduro using new technology which incapacitated the entire protective team and allowed two dozen U.S. troops to easily defeat hundreds of defenders. Guard: "At one point, they launched something—I don't know how to describe it... it was like a very intense sound wave. Suddenly I felt like my head was exploding from the inside. We all started bleeding from the nose. Some were vomiting blood. We fell to the ground, unable to move." In the 1990s and early 2000s, the Pentagon poured resources into the Joint Non-Lethal Weapons Directorate, now rebranded the Joint Intermediate Force Capabilities Office. Their task was to develop non-lethal, or less-lethal weapons which ... would disable or incapacitate people. The Pentagon worked on a wide variety of concepts, including strobe dazzlers, malodorants and electroshock projectiles. One of the biggest was the millimeter-wave Active Denial System or ‘pain beam’ which could inflict severe pain and drive back rioters from several hundred meters away. A patented device known as Electromagnetic Personnel Interdiction Control (EPIC) ... uses radio waves “to excite and interrupt the normal process of human hearing and equilibrium.”

Note: Acoustic or sonic weapons can vibrate the insides of humans to stun them, nauseate them, or even "liquefy their bowels and reduce them to quivering diarrheic messes," according to a Pentagon briefing. These devices can also cause excruciating pain, with some able to heat up skin from a distance and others that can beam sound into the skull of a human. Learn more about non-lethal weapons in our comprehensive Military-Intelligence Corruption Information Center.

The Defense Department has spent more than a year testing a device purchased in an undercover operation that some investigators think could be the cause of a series of mysterious ailments impacting US spies, diplomats and troops that are colloquially known as Havana Syndrome. A division of the Department of Homeland Security, Homeland Security Investigations, purchased the device for millions of dollars in the waning days of the Biden administration, using funding provided by the Defense Department, according to two ... sources. Officials paid “eight figures” for the device, these people said, declining to offer a more specific number. The device is still being studied and there is ongoing debate ... over its link to the roughly dozens of anomalous health incidents that remain officially unexplained. The device acquired by HSI produces pulsed radio waves, one of the sources said, which some officials and academics have speculated for years could be the cause of the incidents. Although the device is not entirely Russian in origin, it contains Russian components. The device could fit in a backpack. Havana Syndrome, known officially as “anomalous health episodes” ... first emerged in late 2016, when a cluster of US diplomats stationed in the Cuban capital of Havana began reporting symptoms consistent with head trauma, including vertigo and extreme headaches. In subsequent years, there have been cases reported around the world.

Note: Read more about Havana Syndrome. For more along these lines, read our concise summaries of news articles on intelligence agency corruption.

On Thursday, lawmakers in the House approved a “pilot program” in the pending Pentagon budget bill that could eventually open the door to sending billions to big contractors, while providing what critics say would be little benefit to the military. The provision, which appeared in the budget bill after a closed-door session overseen by top lawmakers, would allow contractors to claim reimbursement for the interest they pay on debt they take on to build weapons and other gadgets for the armed services. One big defense contractor alone, Lockheed Martin, reported having more than $17.8 billion in outstanding interest payments last year, said Julia Gledhill, an analyst at the nonprofit Stimson Center. “The fact that we are even exploring this question is a little crazy in terms of financial risk for the government,” Gledhill said. Gledhill said even some Capitol Hill staffers were “scandalized” to see the provision in the final bill, which will likely be approved by the Senate. The switch to covering financing costs seems to be in line with a larger push this year to shake up the defense industry. The Pentagon itself was dubious in a 2023 study conducted by the Office of the Under Secretary of Defense for Acquisition and Sustainment. The Pentagon found that policy change might even supercharge the phenomenon of big defense contractors using taxpayer dollars for stock buybacks instead of research and development.

Note: Read our concise summaries of news articles on government corruption.

The American weapons maker Anduril ... is partnering with EDGE Group, a weapons conglomerate controlled by the United Arab Emirates, a nation run entirely by the royal families of its seven emirates that permits virtually none of the activities typically associated with democratic societies. In the UAE, free expression and association are outlawed, and dissident speech is routinely and brutally punished without due process. A 2024 assessment of political rights and civil liberties by Freedom House, a U.S. State Department-backed think tank, gave the UAE a score of 18 out of 100. The EDGE–Anduril Production Alliance, as it will be known, will focus on autonomous weapons systems, including the production of Anduril’s “Omen” drone. The UAE has agreed to purchase the first 50 Omen drones built through the partnership. EDGE Chair Faisal Al Bannai explained in a 2019 interview that EDGE was working to develop weapons systems tailored to defeating low-tech “militia-style” militant groups. Nathaniel Raymond, who leads the Humanitarian Research Lab at the Yale School of Public Health ... argued that “not since Operation Cyclone,” the CIA effort to arm the Afghan mujahideen, “has there been a covert action by any nation state to arm a paramilitary proxy group at this scale and sophistication and try to write it off as just a series of happy coincidences.”

Note: For more, read our concise summaries of news articles on war.

By leveraging the dual-use nature of many of their products, where defense technologies can be integrated into the commercial sector and vice versa, Pentagon contractors like Palantir, Skydio, and General Atomics have gained ground at home for surveillance technologies — especially drones — proliferating war-tested military tech within the domestic sphere. Palantir’s Gotham platform was initially promoted as intelligence software for defense and counter-terrorism purposes. Now adopted among U.S. law enforcement, hundreds of police departments can use Gotham to analyze data on civilians’ whereabouts. Palantir has gone on to sell similar software to other government agencies, obtaining a $30 million ICE contract this spring to help the agency track undocumented immigrants. L3Harris Stingrays, or cell site simulators, are sophisticated phone trackers originally designed for military use. Police departments subsequently adopted these systems to track and collect information on crime suspects. Defense contractors are similarly leveraging their battle-tested drones to capitalize on a booming domestic market. The broader public safety drone market is expected to nearly triple within the next 10 years. The DRONE Act, meanwhile, included in the FY2026 National Defense Authorization Act, would let police purchase and operate the systems with federal grants, thus flooding drone procurement processes with more federal funds.

Note: For more along these lines, read our concise summaries of news articles on Big Tech and police corruption.

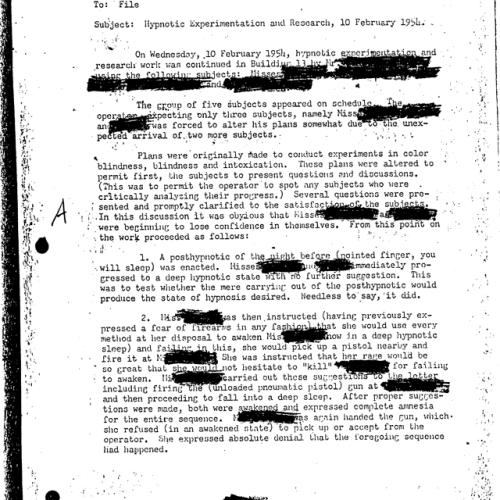

During the Cold War, the first implants showing that we could control animal minds sparked panic. The C.I.A. had its own clandestine experimental mind-control program. People warned of brain warfare. Those fears [are] back, along with a conversation about what it means to have freedom of thought at a time when technology is literally being implanted in our brains. Brain computer interface, or B.C.I. ... are very small devices that go right on the surface of your brain, where they can pick up neural activity. The data is transmitted via Bluetooth to a computer program, which decodes the information. In a sense, they’re hooked up to an artificial intelligence. So the neural network inside your mind communicates with a neural network outside. And through that, we are able to reconstruct people’s intentions. For people with degenerative diseases, or who are paralyzed, or who otherwise have lost important abilities, these implants have been totally revolutionary. These patients can move their hands, type and in some cases, speak again. Optogenetics, a technique for turning isolated neurons on and off, has been used to implant false memories in mice, raising the possibility that, in the distant future, something similar could be done in humans. Neuroprivacy is the idea that we should have to give consent to anyone who wants access to our innermost selves. But there’s a question: Does neuroprivacy apply only to my unspoken thoughts? Or does it apply to the electrical activity in my brain?

Note: Read about the Pentagon's plans to use our brains as warfare, describing how the human body is war's next domain. Learn more about biotech dangers. For more along these lines, read our concise summaries of news articles on Big Tech and microchip implants.

November 30 marks the International Day of Remembrance for all Victims of Chemical Warfare. Between 1961 and 1971, the U.S. military sprayed an estimated 20 million gallons of herbicides over southern Vietnam, along the Ho Chi Minh Trail in Laos, and parts of Cambodia. Nearly two-thirds was Agent Orange, later discovered to be contaminated with 2,3,7,8-Tetrachlorodibenzo-p-dioxin (TCDD) — a potent, long-lasting dioxin. TCDD is a known human carcinogen and an endocrine disruptor, linked to cancers, reproductive disorders, and birth defects that can span generations. By the letter of the CWC, Agent Orange is not classified as a “chemical weapon.” If you ask a Vietnam veteran suffering from Parkinson’s, cancer, heart disease, or any of the 19 types of conditions the Department of Veterans Affairs (VA) associates with Agent Orange exposure, you’ll hear a very different story. To them, it was every bit a weapon designed to destroy life and health. A 2018 Government Accountability Office report found that over 757,000 veterans — about one in four who served — were receiving benefits linked to Agent Orange. The 2022 PACT Act broadened that circle to veterans who served in other areas where Agent Orange was used. By 2024, more than 84,000 new Vietnam-era veterans were granted compensation, many due to exposure. Fifty years after the Vietnam War ended, the toxic legacy of Agent Orange and other dioxins lingers on.

Note: For more along these lines, read our concise summaries of news articles on military corruption and toxic chemicals.

Future wars just might revolve around insect-size spy robots. A recent digest of present-day microbots by US national security magazine The National Interest breaks down the many machines currently in development by the US military and its associates. They include sea-based microdrones, cockroach-style surveillance bots, and even cyborg insects. Arguably the most refined program to date is the RoboBee, currently being shopped by Harvard’s Wyss Institute. Originally funded by a $9.3 million grant from the National Science Foundation in 2009, the RoboBee is a bug-sized autonomous flying vehicle capable of transitioning from water to air, perching on surfaces, and autonomous collision avoidance in swarms. The RoboBee features two “wafer-thin” wings that flap some 120 times a second to achieve vertical takeoff and mid-air hovering. The US Defense Advanced Research Projects Agency (DARPA) has reportedly taken a keen interest in RoboBee prototypes, sponsoring research into microfabrication technology, presumably for quick field deployments. Other developments, like the aforementioned cyborg insect, remain in early stages. Researchers have successfully demonstrated the capabilities of these remote-control systems using a range of insect hosts, from the unicorn beetle to the humble cockroach. Underwater microrobotics are another area of interest for DARPA.

Note: Explore all news article summaries on emerging warfare technology in our comprehensive news database.

AI could mean fewer body bags on the battlefield — but that's exactly what terrifies the godfather of AI. Geoffrey Hinton, the computer scientist known as the "godfather of AI," said the rise of killer robots won't make wars safer. It will make conflicts easier to start by lowering the human and political cost of fighting. Hinton said ... that "lethal autonomous weapons, that is weapons that decide by themselves who to kill or maim, are a big advantage if a rich country wants to invade a poor country." "The thing that stops rich countries invading poor countries is their citizens coming back in body bags," he said. "If you have lethal autonomous weapons, instead of dead people coming back, you'll get dead robots coming back." That shift could embolden governments to start wars — and enrich defense contractors in the process, he said. Hinton also said AI is already reshaping the battlefield. "It's fairly clear it's already transformed warfare," he said, pointing to Ukraine as an example. "A $500 drone can now destroy a multimillion-dollar tank." Traditional hardware is beginning to look outdated, he added. "Fighter jets with people in them are a silly idea now," Hinton said. "If you can have AI in them, AIs can withstand much bigger accelerations — and you don't have to worry so much about loss of life." One Ukrainian soldier who works with drones and uncrewed systems [said] in a February report that "what we're doing in Ukraine will define warfare for the next decade."

Note: As law expert Dr. Salah Sharief put it, "The detached nature of drone warfare has anonymized and dehumanized the enemy, greatly diminishing the necessary psychological barriers of killing." For more, read our concise summaries of news articles on AI and warfare technology.

“Ice is just around the corner,” my friend said, looking up from his phone. A day earlier, I had met with foreign correspondents at the United Nations to explain the AI surveillance architecture that Immigration and Customs Enforcement (Ice) is using across the United States. The law enforcement agency uses targeting technologies which one of my past employers, Palantir Technologies, has both pioneered and proliferated. Technology like Palantir’s plays a major role in world events, from wars in Iran, Gaza and Ukraine to the detainment of immigrants and dissident students in the United States. Known as intelligence, surveillance, target acquisition and reconnaissance (Istar) systems, these tools, built by several companies, allow users to track, detain and, in the context of war, kill people at scale with the help of AI. They deliver targets to operators by combining immense amounts of publicly and privately sourced data to detect patterns, and are particularly helpful in projects of mass surveillance, forced migration and urban warfare. Also known as “AI kill chains”, they pull us all into a web of invisible tracking mechanisms that we are just beginning to comprehend, yet are starting to experience viscerally in the US as Ice wields these systems near our homes, churches, parks and schools. The dragnets powered by Istar technology trap more than migrants and combatants ... in their wake. They appear to violate first and fourth amendment rights.

Note: Read how Palantir helped the NSA and its allies spy on the entire planet. Learn more about emerging warfare technology in our comprehensive Military-Intelligence Corruption Information Center. For more, read our concise summaries of news articles on AI and Big Tech.

Local cops have gotten tens of millions of dollars’ worth of discounted military gear under a secretive federal program that is poised to grow under recent executive action. The 1122 program ... presents a danger to people facing off against militarized cops, according to Women for Weapons Trade Transparency. “All of these things combined serve as a threat to free speech, an intimidation tactic to protest,” said Lillian Mauldin, the co-founder of the nonprofit group, which produced the report released this week. The federal government’s 1033 program ... has long sent surplus gear like mine-resistant vehicles and bayonets to local police. Since 1994, however, the even more obscure 1122 program has allowed local cops to purchase everything from uniforms to riot shields at federal government rates. The program turns the feds into purchasing agents for local police. Local cops have used the program to pick up 16 Lenco BearCats, fearsome-looking armored police vehicles. Those vehicles represented 4.8 percent of the total spending identified in the ... report. Surveillance gear and software represented another 6.4 percent, and weapons or riot gear represented 5 percent. One agency bought a $428,000 Star Safire thermal imaging system, the kind used in military helicopters. The Texas Department of Public Safety’s intelligence and counterterrorism unit purchased a $1.5 million surveillance software license. Another agency bought an $89,000 covert camera system.

Note: Read more about the Pentagon's 1033 program. For more along these lines, read our concise summaries of news articles on police corruption and the erosion of civil liberties.

Department of Defense spending is increasingly going to large tech companies including Microsoft, Google parent company Alphabet, Oracle, and IBM. OpenAI recently brought on former U.S. Army general and National Security Agency Director Paul M. Nakasone to its Board of Directors. The U.S. military discreetly, yet frequently, collaborated with prominent tech companies through thousands of subcontractors through much of the 2010s, obfuscating the extent of the two sectors’ partnership from tech employees and the public alike. The long-term, deep-rooted relationship between the institutions, spurred by massive Cold War defense and research spending and bound ever tighter by the sectors’ revolving door, ensures that advances in the commercial tech sector benefit the defense industry’s bottom line. Military-tech spending has yielded myriad landmark inventions. The internet, for example, began as an Advanced Research Projects Agency (ARPA, now known as Defense Advanced Research Projects Agency, or DARPA) research project called ARPANET, the first network of computers. Decades later, graduate students Sergey Brin and Larry Page received funding from DARPA, the National Science Foundation, and U.S. intelligence community-launched development program Massive Digital Data Systems to create what would become Google. Other prominent DARPA-funded inventions include transit satellites, a precursor to GPS, and the iPhone Siri app, which, instead of being picked up by the military, was ultimately adapted to consumer ends by Apple.

Note: Watch our latest video on the militarization of Big Tech. For more, read our concise summaries of news articles on AI, warfare technology, and Big Tech.

The US military may soon have an army of faceless suicide bombers at their disposal, as an American defense contractor has revealed their newest war-fighting drone. AeroVironment unveiled the Red Dragon in a video on their YouTube page, the first in a new line of 'one-way attack drones.' This new suicide drone can reach speeds up to 100 mph and can travel nearly 250 miles. The new drone takes just 10 minutes to set up and launch and weighs just 45 pounds. Once the small tripod the Red Dragon takes off from is set up, AeroVironment said soldiers would be able to launch up to five per minute. Since the suicide robot can choose its own target in the air, the US military may soon be taking life-and-death decisions out of the hands of humans. Once airborne, its AVACORE software architecture functions as the drone's brain, managing all its systems and enabling quick customization. Red Dragon's SPOTR-Edge perception system acts like smart eyes, using AI to find and identify targets independently. Simply put, the US military will soon have swarms of bombs with brains that don't land until they've chosen a target and crash into it. Despite Red Dragon's ability to choose a target with 'limited operator involvement,' the Department of Defense (DoD) has said it's against the military's policy to allow such a thing to happen. The DoD updated its own directives to mandate that 'autonomous and semi-autonomous weapon systems' always have the built-in ability to allow humans to control the device.

Note: Drones create more terrorists than they kill. For more, read our concise summaries of news articles on warfare technology and Big Tech.

In 2003 [Alexander Karp] – together with Peter Thiel and three others – founded a secretive tech company called Palantir. And some of the initial funding came from the investment arm of – wait for it – the CIA! The lesson that Karp and his co-author draw [in their book The Technological Republic: Hard Power, Soft Belief and the Future of the West] is that “a more intimate collaboration between the state and the technology sector, and a closer alignment of vision between the two, will be required if the United States and its allies are to maintain an advantage that will constrain our adversaries over the longer term. The preconditions for a durable peace often come only from a credible threat of war.” Or, to put it more dramatically, maybe the arrival of AI makes this our “Oppenheimer moment”. For those of us who have for decades been critical of tech companies, and who thought that the future for liberal democracy required that they be brought under democratic control, it’s an unsettling moment. If the AI technology that giant corporations largely own and control becomes an essential part of the national security apparatus, what happens to our concerns about fairness, diversity, equity and justice as these technologies are also deployed in “civilian” life? For some campaigners and critics, the reconceptualisation of AI as essential technology for national security will seem like an unmitigated disaster – Big Brother on steroids, with resistance being futile, if not criminal.

Note: Learn more about emerging warfare technology in our comprehensive Military-Intelligence Corruption Information Center. For more, read our concise summaries of news articles on AI and intelligence agency corruption.

Before signing its lucrative and controversial Project Nimbus deal with Israel, Google knew it couldn’t control what the nation and its military would do with the powerful cloud-computing technology, a confidential internal report obtained by The Intercept reveals. The report makes explicit the extent to which the tech giant understood the risk of providing state-of-the-art cloud and machine learning tools to a nation long accused of systemic human rights violations. Not only would Google be unable to fully monitor or prevent Israel from using its software to harm Palestinians, but the report also notes that the contract could obligate Google to stonewall criminal investigations by other nations into Israel’s use of its technology. And it would require close collaboration with the Israeli security establishment — including joint drills and intelligence sharing — that was unprecedented in Google’s deals with other nations. The rarely discussed question of legal culpability has grown in significance as Israel enters the third year of what has widely been acknowledged as a genocide in Gaza — with shareholders pressing the company to conduct due diligence on whether its technology contributes to human rights abuses. Google doesn’t furnish weapons to the military, but it provides computing services that allow the military to function — its ultimate function being, of course, the lethal use of those weapons. Under international law, only countries, not corporations, have binding human rights obligations.

Note: For more along these lines, read our concise summaries of news articles on AI and government corruption.

2,500 US service members from the 15th Marine Expeditionary Unit [tested] a leading AI tool the Pentagon has been funding. The generative AI tools they used were built by the defense-tech company Vannevar Labs, which in November was granted a production contract worth up to $99 million by the Pentagon’s startup-oriented Defense Innovation Unit. The company, founded in 2019 by veterans of the CIA and US intelligence community, joins the likes of Palantir, Anduril, and Scale AI as a major beneficiary of the US military’s embrace of artificial intelligence. In December, the Pentagon said it will spend $100 million in the next two years on pilots specifically for generative AI applications. In addition to Vannevar, it’s also turning to Microsoft and Palantir, which are working together on AI models that would make use of classified data. People outside the Pentagon are warning about the potential risks of this plan, including Heidy Khlaaf ... at the AI Now Institute. She says this rush to incorporate generative AI into military decision-making ignores more foundational flaws of the technology: “We’re already aware of how LLMs are highly inaccurate, especially in the context of safety-critical applications that require precision.” Khlaaf adds that even if humans are “double-checking” the work of AI, there's little reason to think they're capable of catching every mistake. “‘Human-in-the-loop’ is not always a meaningful mitigation,” she says.

Note: For more, read our concise summaries of news articles on warfare technology and Big Tech.

Alexander Balan was on a California beach when the idea for a new kind of drone came to him. This eureka moment led Balan to found Xdown, the company that’s building the P.S. Killer (PSK)—an autonomous kamikaze drone that works like a hand grenade and can be thrown like a football. The PSK is a “throw-and-forget” drone, Balan says, referencing the “fire-and-forget” missile that, once locked on to a target, can seek it on its own. Instead of depending on remote controls, the PSK will be operated by AI. Soldiers should be able to grab it, switch it on, and throw it—just like a football. The PSK can carry one or two 40 mm grenades commonly used in grenade launchers today. The grenades could be high-explosive dual purpose, designed to penetrate armor while also creating an explosive fragmentation effect against personnel. These grenades can also “airburst”—programmed to explode in the air above a target for maximum effect. Infantry, special operations, and counterterrorism units can easily store PSK drones in a field backpack and tote them around, taking one out to throw at any given time. They can also be packed by the dozen in cargo airplanes, which can fly over an area and drop swarms of them. Balan says that one Defense Department official told him “This is the most American munition I have ever seen.” The nonlethal version of the PSK [replaces] its warhead with a supply container so that it’s able to “deliver food, medical kits, or ammunition to frontline troops” (though given the 1.7-pound payload capacity, such packages would obviously be small).

Note: The US military is using Xbox controllers to operate weapons systems. The latest US Air Force recruitment tool is a video game that allows players to receive in-game medals and achievements for drone bombing Iraqis and Afghans. For more, read our concise summaries of news articles on warfare technologies and watch our latest video on the militarization of Big Tech.

Last April, in a move generating scant media attention, the Air Force announced that it had chosen two little-known drone manufacturers—Anduril Industries of Costa Mesa, California, and General Atomics of San Diego—to build prototype versions of its proposed Collaborative Combat Aircraft (CCA), a future unmanned plane intended to accompany piloted aircraft on high-risk combat missions. The Air Force expects to acquire at least 1,000 CCAs over the coming decade at around $30 million each, making this one of the Pentagon’s costliest new projects. In winning the CCA contract, Anduril and General Atomics beat out three of the country’s largest and most powerful defense contractors ... posing a severe threat to the continued dominance of the existing military-industrial complex, or MIC. The very notion of a “military-industrial complex” linking giant defense contractors to powerful figures in Congress and the military was introduced on January 17, 1961, by President Dwight D. Eisenhower in his farewell address. In 2024, just five companies—Lockheed Martin (with $64.7 billion in defense revenues), RTX (formerly Raytheon, with $40.6 billion), Northrop Grumman ($35.2 billion), General Dynamics ($33.7 billion), and Boeing ($32.7 billion)—claimed the vast bulk of Pentagon contracts. Now ... a new force—Silicon Valley startup culture—has entered the fray, and the military-industrial complex equation is suddenly changing dramatically.

Note: For more, read our concise summaries of news articles on warfare technologies and watch our latest video on the militarization of Big Tech.

In the Air Force, drone pilots did not pick the targets. That was the job of someone pilots called “the customer.” The customer might be a conventional ground force commander, the C.I.A. or a classified Special Operations strike cell. [Drone operator] Captain Larson described a mission in which the customer told him to track and kill a suspected Al Qaeda member. Then, the customer told him to use the Reaper’s high-definition camera to follow the man’s body to the cemetery and kill everyone who attended the funeral. In December 2016, the Obama administration loosened the rules. Strikes once carried out only after rigorous intelligence-gathering and approval processes were often ordered up on the fly, hitting schools, markets and large groups of women and children. Before the rules changed, [former Air Force captain James] Klein said, his squadron launched about 16 airstrikes in two years. Afterward, it conducted them almost daily. Once, Mr. Klein said, the customer pressed him to fire on two men walking by a river in Syria, saying they were carrying weapons over their shoulders. The weapons turned out to be fishing poles. Over time, Mr. Klein grew angry and depressed. Eventually, he refused to fire any more missiles. In 2020, he retired, one of many disillusioned drone operators who quietly dropped out. “We were so isolated,” he said. “The biggest tell is that very few people stayed in the field. They just couldn’t take it.” Bennett Miller was an intelligence analyst, trained to study the Reaper’s video feed. In late 2019 ... his team tracked a man in Afghanistan who the customer said was a high-level Taliban financier. For a week, the crew watched the man feed his animals, eat with family in his courtyard. Then the customer ordered the crew to kill him. A week later, the Taliban financier’s name appeared again on the target list. “We got the wrong guy. I had just killed someone’s dad,” Mr. Miller said. “I had watched his kids pick up the body parts.” In February 2020, he ... was hospitalized, diagnosed with PTSD and medically retired. Veterans with combat-related injuries, even injuries suffered in training, get special compensation worth about $1,000 per month. Mr. Miller does not qualify, because the Department of Veterans Affairs does not consider drone missions combat. “It’s like they are saying all the people we killed somehow don’t really count,” he said. “And neither do we.”

Note: Captain Larson took his own life in 2020. Furthermore, drones create more terrorists than they kill. Read about former drone operator Brandon Bryant's emotional experience of killing a child in Afghanistan that his superiors told him was a dog. For more along these lines, explore concise summaries of revealing news articles on war.

The Defense Advanced Research Projects Agency, the Pentagon's top research arm, wants to find out if red blood cells could be modified in novel ways to protect troops. The DARPA program, called the Red Blood Cell Factory, is looking for researchers to study the insertion of "biologically active components" or "cargoes" in red blood cells. The hope is that modified cells would enhance certain biological systems, "thus allowing recipients, such as warfighters, to operate more effectively in dangerous or extreme environments." Red blood cells could act like a truck, carrying "cargo" or special protections, to all parts of the body, since they already circulate oxygen everywhere, [said] Christopher Bettinger, a professor of biomedical engineering overseeing the program. "What if we could add in additional cargo ... inside of that disc," Bettinger said, referring to the shape of red blood cells, "that could then confer these interesting benefits?" The research could impact the way troops battle diseases that reproduce in red blood cells, such as malaria, Bettinger hypothesized. "Imagine an alternative world where we have a warfighter that has a red blood cell that's accessorized with a compound that can sort of defeat malaria," Bettinger said. In 2019, the Army released a report called "Cyborg Soldier 2050," which laid out a vision of the future where troops would benefit from neural and optical enhancements, though the report acknowledged ethical and legal concerns.

Note: Read about the Pentagon's plans to use our brains as warfare, describing how the human body is war's next domain. Learn more about biotech dangers.

Militaries, law enforcement, and more around the world are increasingly turning to robot dogs — which, if we're being honest, look like something straight out of a science-fiction nightmare — for a variety of missions ranging from security patrol to combat. Robot dogs first really came on the scene in the early 2000s with Boston Dynamics' "BigDog" design. They have been used in both military and security activities. In November, for instance, it was reported that robot dogs had been added to President-elect Donald Trump's security detail and were on patrol at his home in Mar-a-Lago. Some of the remote-controlled canines are equipped with sensor systems, while others have been equipped with rifles and other weapons. One Ohio company made one with a flamethrower. Some of these designs not only look eerily similar to real dogs but also act like them, which can be unsettling. In the Ukraine war, robot dogs have seen use on the battlefield, the first known combat deployment of these machines. Built by British company Robot Alliance, the systems aren't autonomous, instead being operated by remote control. They are capable of doing many of the things other drones in Ukraine have done, including reconnaissance and attacking unsuspecting troops. The dogs have also been useful for scouting out the insides of buildings and trenches, particularly smaller areas where operators have trouble flying an aerial drone.

Note: Learn more about the troubling partnership between Big Tech and the military. For more, read our concise summaries of news articles on military corruption.

It is often said that autonomous weapons could help minimize the needless horrors of war. Their vision algorithms could be better than humans at distinguishing a schoolhouse from a weapons depot. Some ethicists have long argued that robots could even be hardwired to follow the laws of war with mathematical consistency. And yet for machines to translate these virtues into the effective protection of civilians in war zones, they must also possess a key ability: They need to be able to say no. Human control sits at the heart of governments’ pitch for responsible military AI. Giving machines the power to refuse orders would cut against that principle. Meanwhile, the same shortcomings that hinder AI’s capacity to faithfully execute a human’s orders could cause them to err when rejecting an order. Militaries will therefore need to either demonstrate that it’s possible to build ethical, responsible autonomous weapons that don’t say no, or show that they can engineer a safe and reliable right-to-refuse that’s compatible with the principle of always keeping a human “in the loop.” If they can’t do one or the other ... their promises of ethical and yet controllable killer robots should be treated with caution. The killer robots that countries are likely to use will only ever be as ethical as their imperfect human commanders. They would only promise a cleaner mode of warfare if those using them seek to hold themselves to a higher standard.

Note: Learn more about emerging warfare technology in our comprehensive Military-Intelligence Corruption Information Center. For more, read our concise summaries of news articles on AI and military corruption.

Mitigating the risk of extinction from AI should be a global priority. However, as many AI ethicists warn, this blinkered focus on the existential future threat to humanity posed by a malevolent AI ... has often served to obfuscate the myriad more immediate dangers posed by emerging AI technologies. These “lesser-order” AI risks ... include pervasive regimes of omnipresent AI surveillance and panopticon-like biometric disciplinary control; the algorithmic replication of existing racial, gender, and other systemic biases at scale ... and mass deskilling waves that upend job markets, ushering in an age monopolized by a handful of techno-oligarchs. Killer robots have become a twenty-first-century reality, from gun-toting robotic dogs to swarms of autonomous unmanned drones, changing the face of warfare from Ukraine to Gaza. Palestinian civilians have frequently spoken about the paralyzing psychological trauma of hearing the “zanzana” — the ominous, incessant, unsettling, high-pitched buzzing of drones loitering above. Over a decade ago, children in Waziristan, a region of Pakistan’s tribal belt bordering Afghanistan, experienced a similar debilitating dread of US Predator drones that manifested as a fear of blue skies. “I no longer love blue skies. In fact, I now prefer gray skies. The drones do not fly when the skies are gray,” stated thirteen-year-old Zubair in his testimony before Congress in 2013.

Note: For more along these lines, read our concise summaries of news articles on AI and military corruption.

The Pentagon is turning to a new class of weapons to fight the numerically superior [China's] People’s Liberation Army: drones, lots and lots of drones. In August 2023, the Defense Department unveiled Replicator, its initiative to field thousands of “all-domain, attritable autonomous (ADA2) systems”: Pentagon-speak for low-cost (and potentially AI-driven) machines — in the form of self-piloting ships, large robot aircraft, and swarms of smaller kamikaze drones — that they can use and lose en masse to overwhelm Chinese forces. For the last 25 years, uncrewed Predators and Reapers, piloted by military personnel on the ground, have been killing civilians across the planet. Experts worry that mass production of new low-cost, deadly drones will lead to even more civilian casualties. Advances in AI have increasingly raised the possibility of robot planes, in various nations’ arsenals, selecting their own targets. During the first 20 years of the war on terror, the U.S. conducted more than 91,000 airstrikes ... and killed up to 48,308 civilians, according to a 2021 analysis. “The Pentagon has yet to come up with a reliable way to account for past civilian harm caused by U.S. military operations,” [Columbia Law’s Priyanka Motaparthy] said. “So the question becomes, ‘With the potential rapid increase in the use of drones, what safeguards potentially fall by the wayside? How can they possibly hope to reckon with future civilian harm when the scale becomes so much larger?’”

Note: Learn more about emerging warfare technology in our comprehensive Military-Intelligence Corruption Information Center. For more, read our concise summaries of news articles on military corruption.

At the Technology Readiness Experimentation (T-REX) event in August, the US Defense Department tested an artificial intelligence-enabled autonomous robotic gun system developed by fledgling defense contractor Allen Control Systems dubbed the “Bullfrog.” Consisting of a 7.62-mm M240 machine gun mounted on a specially designed rotating turret outfitted with an electro-optical sensor, proprietary AI, and computer vision software, the Bullfrog was designed to deliver small arms fire on drone targets with far more precision than the average US service member can achieve with a standard-issue weapon. Footage of the Bullfrog in action published by ACS shows the truck-mounted system locking onto small drones and knocking them out of the sky with just a few shots. Should the Pentagon adopt the system, it would represent the first publicly known lethal autonomous weapon in the US military’s arsenal. In accordance with the Pentagon’s current policy governing lethal autonomous weapons, the Bullfrog is designed to keep a human “in the loop” in order to avoid a potential “unauthorized engagement.” In other words, the gun points at and follows targets, but does not fire until commanded to by a human operator. However, ACS officials claim that the system can operate totally autonomously should the US military require it to in the future, with sentry guns taking the entire kill chain out of the hands of service members.

Note: Learn more about emerging warfare technology in our comprehensive Military-Intelligence Corruption Information Center. For more, see concise summaries of deeply revealing news articles on AI from reliable major media sources.

On the sidelines of the International Institute for Strategic Studies’ annual Shangri-La Dialogue in June, US Indo-Pacific Command chief Navy Admiral Samuel Paparo colorfully described the US military’s contingency plan for a Chinese invasion of Taiwan as flooding the narrow Taiwan Strait between the two countries with swarms of thousands upon thousands of drones, by land, sea, and air, to delay a Chinese attack enough for the US and its allies to muster additional military assets. “I want to turn the Taiwan Strait into an unmanned hellscape using a number of classified capabilities,” Paparo said, “so that I can make their lives utterly miserable for a month, which buys me the time for the rest of everything.” China has a lot of drones and can make a lot more drones quickly, creating a likely advantage during a protracted conflict. This stands in contrast to American and Taiwanese forces, who do not have large inventories of drones. The Pentagon’s “hellscape” plan proposes that the US military make up for this growing gap by producing and deploying what amounts to a massive screen of autonomous drone swarms designed to confound enemy aircraft, provide guidance and targeting to allied missiles, knock out surface warships and landing craft, and generally create enough chaos to blunt (if not fully halt) a Chinese push across the Taiwan Strait. Planning a “hellscape” of hundreds of thousands of drones is one thing, but actually making it a reality is another.

Note: Learn more about warfare technology in our comprehensive Military-Intelligence Corruption Information Center. For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

Razish [is] a fake village built by the US army to train its soldiers for urban warfare. It is one of a dozen pretend settlements scattered across “the Box” (as in sandbox) – a vast landscape of unforgiving desert at the Fort Irwin National Training Center (NTC), the largest such training facility in the world. Covering more than 1,200 square miles, it is a place where soldiers come to practise liberating the citizens of the imaginary oil-rich nation Atropia from occupation by the evil authoritarian state of Donovia. Fake landmines dot the valleys, fake police stations are staffed by fake police, and fake villages populated by citizens of fake nation states are invaded daily by the US military – wielding very real artillery. It operates a fake cable news channel, on which officers are subjected to aggressive TV interviews, trained to win the media war as well as the physical one. Recently, it even introduced internal social media networks, called Tweeter and Fakebook, where mock civilians spread fake news about the battles – social media being the latest weapon in the arsenal of modern war. Razish may still have a Middle Eastern look, but the actors hawking chunks of plastic meat and veg in the street market speak not English or Arabic, but Russian. This military role-playing industry has ballooned since the early 2000s, now comprising a network of 256 companies across the US, receiving more than $250m a year in government contracts. The actors are often recent refugees, having fled one real-world conflict only to enter another, simulated one.

Note: For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

Billionaire Elon Musk’s brain-computer interface (BCI) company Neuralink made headlines earlier this year for inserting its first brain implant into a human being. Such implants ... are described as “fully implantable, cosmetically invisible, and designed to let you control a computer or mobile device anywhere you go.” They can help people regain abilities lost due to aging, ailments, accidents or injuries, thus improving quality of life. Yet, great ethical concerns arise with such advancements, and the tech is already being used for questionable purposes. Some Chinese employers have started using “emotional surveillance technology” to monitor workers’ brainwaves. Governments and militaries are already ... describing the human body and brain as war’s next domain. On this new “battlefield,” an era of neuroweapons ... has begun. The Pentagon’s research arm DARPA directly or indirectly funds about half of invasive neural interface technology companies in the US. DARPA has initiated at least 40 neurotechnology-related programs over the past 24 years. As a 2024 RAND report speculates, if BCI technologies are hacked or compromised, “a malicious adversary could potentially inject fear, confusion, or anger into [a BCI] commander’s brain and cause them to make decisions that result in serious harm.” Academic Nicholas Evans speculates, further, that neuroimplants could “control an individual’s mental functions,” perhaps to manipulate memories, emotions, or even to torture the wearer. In a [military research paper] on neurowarfare: “Microbiologists have recently discovered mind-controlling parasites that can manipulate the behavior of their hosts according to their needs by switching genes on or off. Since human behavior is at least partially influenced by their genetics, nonlethal behavior modifying genetic bioweapons that spread through a highly contagious virus could thus be, in principle, possible.”

Note: The CIA once used brain surgery to make six remote controlled dogs. For more, see important information on microchip implants and CIA mind control programs from reliable major media sources.

The Palestinian population is intimately familiar with how new technological innovations are first weaponized against them, ranging from the electric fences and unmanned drones used to trap people in Gaza to the facial recognition software monitoring Palestinians in the West Bank. Groups like Amnesty International have called Israel an Automated Apartheid and repeatedly highlight stories, testimonies, and reports about cyber-intelligence firms, including the infamous NSO Group (the Israeli surveillance company behind the Pegasus software) conducting field tests and experiments on Palestinians. Reports have highlighted: “Testing and deployment of AI surveillance and predictive policing systems in Palestinian territories. In the occupied West Bank, Israel increasingly utilizes facial recognition technology to monitor and regulate the movement of Palestinians. Israeli military leaders described AI as a significant force multiplier, allowing the IDF to use autonomous robotic drone swarms to gather surveillance data, identify targets, and streamline wartime logistics.” The Palestinian towns and villages near Israeli settlements have been described as laboratories for security solutions companies to test their technologies on Palestinians before marketing them to places like Colombia. The Israeli government hopes to crystalize its “automated apartheid” through the tokenization and privatization of various industries and establishing a technocratic government in Gaza.

Note: For more along these lines, see concise summaries of deeply revealing news articles on government corruption and the disappearance of privacy from reliable major media sources.

In 2023, this country’s drone warfare program has entered its third decade with no end in sight. Despite the fact that the 22nd anniversary of 9/11 is approaching, policymakers have demonstrated no evidence of reflecting on the failures of drone warfare and how to stop it. Instead, the focus continues to be on simply shifting drone policy in minor ways within an ongoing violent system. Washington’s war on terror has inflicted disproportionate violence on communities across the globe, while using this form of asymmetrical warfare to further expand the space between the value placed on American lives and those of Muslims. Since the war on terror was launched, the London-based watchdog group Airwars has estimated that American air strikes have killed at least 22,679 civilians and possibly up to 48,308 of them. Such killings have been carried out for the most part by desensitized killers, who have been primed towards the dehumanization of the targets of those murderous machines. In the words of critic Saleh Sharief, “The detached nature of drone warfare has anonymized and dehumanized the enemy, greatly diminishing the necessary psychological barriers of killing.” While the use of drones in the war on terror began under President George W. Bush, it escalated dramatically under Obama. Then, in the Trump years, it rose yet again. Though the use of drones in Joe Biden’s first year in office was lower than Trump’s, what has remained consistent is the lack of ... accountability for the slaughter of civilians.

Note: A 2014 analysis found that attempts to kill 41 people with drones resulted in 1,147 deaths. For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

Though once confined to the realm of science fiction, the concept of supercomputers killing humans has now become a distinct possibility. In addition to developing a wide variety of "autonomous," or robotic combat devices, the major military powers are also rushing to create automated battlefield decision-making systems, or what might be called "robot generals." In wars in the not-too-distant future, such AI-powered systems could be deployed to deliver combat orders to American soldiers, dictating where, when, and how they kill enemy troops or take fire from their opponents. In its budget submission for 2023, for example, the Air Force requested $231 million to develop the Advanced Battlefield Management System (ABMS), a complex network of sensors and AI-enabled computers designed to ... provide pilots and ground forces with a menu of optimal attack options. As the technology advances, the system will be capable of sending "fire" instructions directly to "shooters," largely bypassing human control. The Air Force's ABMS is intended to ... connect all US combat forces, the Joint All-Domain Command-and-Control System (JADC2, pronounced "Jad-C-two"). "JADC2 intends to enable commanders to make better decisions by collecting data from numerous sensors, processing the data using artificial intelligence algorithms to identify targets, then recommending the optimal weapon ... to engage the target," the Congressional Research Service reported in 2022.

Note: Read about the emerging threat of killer robots on the battlefield. For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

Weapons-grade robots and drones being utilized in combat isn't new. But AI software is, and it's enhancing – in some cases, to the extreme – the existing hardware, which has been modernizing warfare for the better part of a decade. Now, experts say, developments in AI have pushed us to a point where global forces have no choice but to rethink military strategy – from the ground up. "It's realistic to expect that AI will be piloting an F-16 and will not be that far out," Nathan Michael, Chief Technology Officer of Shield AI, a company whose mission is "building the world's best AI pilot," says. We don't truly comprehend what we're creating. There are also fears that a comfortable reliance on the technology's precision and accuracy – referred to as automation bias – may come back to haunt us, should the tech fail in a life or death situation. One major worry revolves around AI facial recognition software being used to enhance an autonomous robot or drone during a firefight. Right now, a human being behind the controls has to pull the proverbial trigger. Should that be taken away, militants could be mistaken for civilians or allies at the hands of a machine. And remember when the fear of our most powerful weapons being turned against us was just something you saw in futuristic action movies? With AI, that's very possible. "There is a concern over cybersecurity in AI and the ability of either foreign governments or independent actors to take over crucial elements of the military," [filmmaker Jesse Sweet] said.

Note: For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

Within ten days [of its release], the first-person military shooter video game [Call of Duty: Modern Warfare II] earned more than $1 billion in revenue. The Call of Duty franchise is an entertainment juggernaut, having sold close to half a billion games since it was launched in 2003. Its publisher, Activision Blizzard, is a giant in the industry. Details gleaned from documents obtained under the Freedom of Information Act reveal that Call of Duty is not a neutral first-person shooter, but a carefully constructed piece of military propaganda, designed to advance the interests of the U.S. national security state. Not only does Activision Blizzard work with the U.S. military to shape its products, but its leadership board is also full of former high state officials. Chief amongst these is Frances Townsend, Activision Blizzard's senior counsel. As the White House's most senior advisor on terrorism and homeland security, Townsend ... became one of the faces of the administration's War on Terror. Activision Blizzard's chief administration officer, Brian Bulatao ... was chief operating officer for the CIA, placing him third in command of the agency. Bulatao went straight from the State Department into the highest echelons of Activision Blizzard, despite no experience in the entertainment industry. [This] raises serious questions around privacy and state control over media. "Call of Duty ... has been flagged up for recreating real events as game missions and manipulating them for geopolitical purposes," [journalist Tom] Secker told MintPress.

Note: The latest US Air Force recruitment tool is a video game that allows players to receive in-game medals and achievements for drone bombing Iraqis and Afghans. For more on this disturbing "military-entertainment complex" trend, explore the work of investigative journalist Tom Secker, who recently produced a documentary, Theaters of War: How the Pentagon and CIA Took Hollywood, and published a new book, Superheroes, Movies and the State: How the U.S. Government Shapes Cinematic Universes.

Last week, an Israeli defense company painted a frightening picture. In a roughly two-minute video on YouTube that resembles an action movie, soldiers out on a mission are suddenly pinned down by enemy gunfire and calling for help. In response, a tiny drone zips off its mother ship to the rescue, zooming behind the enemy soldiers and killing them with ease. While the situation is fake, the drone — unveiled last week by Israel-based Elbit Systems — is not. The Lanius, which in Latin can refer to butcherbirds, represents a new generation of drone: nimble, wired with artificial intelligence, and able to scout and kill. The machine is based on racing drone design, allowing it to maneuver into tight spaces, such as alleyways and small buildings. After being sent into battle, Lanius’s algorithm can make a map of the scene and scan people, differentiating enemies from allies — feeding all that data back to soldiers who can then simply push a button to attack or kill whom they want. For weapons critics, that represents a nightmare scenario, which could alter the dynamics of war. “It’s extremely concerning,” said Catherine Connolly, an arms expert at Stop Killer Robots, an anti-weapons advocacy group. “It’s basically just allowing the machine to decide if you live or die if we remove the human control element for that.” According to the drone’s data sheet, the drone is palm-size, roughly 11 inches by 6 inches. It has a top speed of 45 miles per hour. It can fly for about seven minutes, and has the ability to carry lethal and nonlethal materials.

Note: US General Paul Selva has warned against employing killer robots in warfare for ethical reasons. For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

Looking Glass Factory, a company based in the Greenpoint neighborhood of Brooklyn, New York, revealed its latest consumer device: a slim, holographic picture frame that turns photos taken on iPhones into 3D displays. Looking Glass received $2.54 million of “technology development” funding from In-Q-Tel, the venture capital arm of the CIA, from April 2020 to March 2021 and a $50,000 Small Business Innovation Research award from the U.S. Air Force in November 2021 to “revolutionize 3D/virtual reality visualization.” Across the various branches of the military and intelligence community, contract records show a rush to jump on holographic display technology, augmented reality, and virtual reality display systems as the latest trend. Critics argue that the technology isn’t quite ready for prime time, and that the urgency to adopt it reflects the Pentagon’s penchant for high-priced, high-tech contracts based on the latest fad in warfighting. Military interest in holographic imaging, in particular, has grown rapidly in recent years. Military planners in China and the U.S. have touted holographic technology to project images “to incite fear in soldiers on a battlefield.” Other uses involve creating three-dimensional maps of villages or specific buildings and analyzing blast forensics. Palmer Luckey, who founded the technology startup Anduril Industries ... has received secretive Air Force contracts to develop next-generation artificial intelligence capabilities under the so-called Project Maven initiative.

Note: For more along these lines, see concise summaries of deeply revealing news articles on intelligence agency corruption from reliable major media sources.

According to the nonprofit organization Airwars, the U.S. has conducted more than 91,000 airstrikes in seven major conflict zones since 2001, with at least 22,000 civilians killed and potentially as many as 48,000. How does America react when it kills civilians? Just last week, we learned that the U.S. military decided that nobody will be held responsible for the August 29 drone attack in Kabul, Afghanistan, that killed 10 members of an Afghan family, including seven children. After an internal review, Secretary of Defense Lloyd Austin chose to take no action, not even a wrist slap for a single intelligence analyst, drone operator, mission commander, or general. U.S. bombings since 2014 have consistently killed civilians but ... the Pentagon has done almost nothing to discern how many were harmed or what went wrong and might be corrected. Savagery consists of more than the act of killing. It also involves a system of impunity that makes clear to the perpetrators that what they are doing is acceptable, necessary — maybe even heroic — and must not cease. To this end, the United States has developed a machinery of impunity that is arguably the most advanced in the world, implicating not only a broad swathe of military personnel but also the entirety of American society. The machinery of impunity actually has two missions: The most obvious is to excuse people who should not be excused. The other is to punish those who try to expose the machine, because it does not function well in daylight.

Note: For more along these lines, see concise summaries of deeply revealing news articles on military corruption from reliable major media sources.

The terrorist attack on the airport in Kabul, Afghanistan’s capital ... killed more than 170 Afghan civilians and 13 U.S. soldiers. Three days later, Biden authorized a drone strike that the U.S. claimed took out a dangerous cell of ISIS fighters. Biden held up this strike, and another one a day earlier, as evidence of his commitment to take the fight to the terrorists in Afghanistan. But the Kabul strike, which targeted a white Toyota Corolla, did not kill any members of ISIS. The victims were 10 civilians, seven of them children. The driver of the car, Zemari Ahmadi, was a respected employee of a U.S. aid organization. Following a New York Times investigation that fully exposed the lie of the U.S. version of events, the Pentagon and the White House admitted that they had killed innocent civilians, calling it “a horrible tragedy of war.” This week, the Pentagon released a summary of its classified review into the attack, which it originally hailed as a “righteous strike” that had thwarted an imminent terror plot. The results were predictable. The report recommended that no personnel be held responsible for the murder of 10 civilians; there was no “criminal negligence,” as the report put it. Daniel Hale, a military veteran who pleaded guilty to disclosing classified documents that exposed lethal weaknesses in the drone program, is serving four years in prison. Hale’s documents exposed how as many as nine out of 10 victims of U.S. drone strikes in Afghanistan were not the intended targets. In Biden’s recent drone strike, 10 of 10 were innocent civilians.

Note: For more along these lines, see concise summaries of deeply revealing news articles on government corruption and war from reliable major media sources.